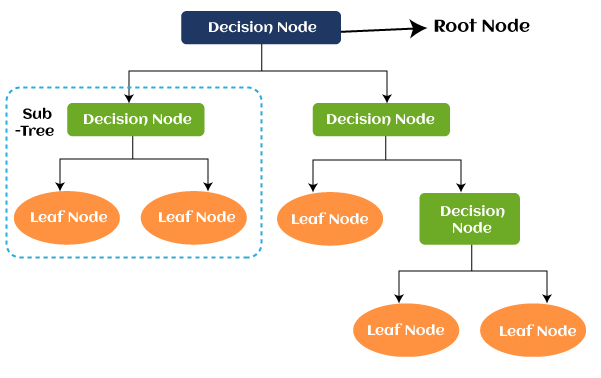

In regression trees, the outcome variable or the decision is continuous, e.g., a numeric value such as a price. Now that you are aware of what a decision tree is and what types exist, we can go into more depth.

Decision trees can be constructed using many algorithms. One of the most widely known is ID3 (Iterative Dichotomiser 3), and this is where decision tree entropy comes into the frame. On every iteration, the ID3 algorithm goes through each unused attribute of the set S and calculates the entropy H(S) or the information gain IG(S) of splitting on that attribute. It then picks the attribute with the highest information gain, which is the same as the lowest weighted entropy after the split.

Since this article is mainly about decision tree entropy, let us first understand the term entropy and simplify it with an example.

Entropy: For a finite set S, entropy, also called Shannon entropy, is a measure of the amount of randomness or uncertainty in the data. In simple terms, it measures the purity of the set. The more mixed the outcomes are, the higher the entropy, and the less certain we can be when predicting an event. The decision tree is built in a top-down manner, starting with a root node. The data at the root node is repeatedly partitioned into subsets whose instances are increasingly homogeneous, which means those subsets have lower entropy.

For example, consider a plate used in cafes with “we are open” written on one side and “we are closed” on the other. The probability of “we are open” is 0.5, and the probability of “we are closed” is 0.5. There is no way of determining the outcome in this example, so the entropy is the highest possible: 1 bit for two equally likely outcomes.

Coming back to the same example, suppose the plate had “we are open” written on both sides. Then the outcome could be predicted perfectly, because whichever side faces the front, it will read “we are open.” In other words, there is no randomness, so the entropy is zero.
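The entropy and information-gain calculations described above can be sketched in a few lines of Python. This is a minimal sketch under stated assumptions, not a full ID3 implementation. It uses base-2 logarithms, so entropy is measured in bits, and it computes `H(S) = -sum(p * log2(p))` over the class proportions `p`. It also models the plate as a list of equally likely outcomes. Only ID3's attribute-selection step is shown; the recursive tree construction is left out. The helper names `entropy`, `information_gain`, and `best_attribute` are hypothetical, and so is the small café dataset, which exists purely for illustration.

```python
from collections import Counter
from math import log2


def entropy(labels):
    """Shannon entropy H(S) in bits: sum of p * log2(1/p) over class proportions."""
    total = len(labels)
    return sum((c / total) * log2(total / c) for c in Counter(labels).values())


def information_gain(rows, attribute, target):
    """IG(S, A) = H(S) - sum over values v of |S_v|/|S| * H(S_v)."""
    parent = entropy([row[target] for row in rows])
    total = len(rows)
    weighted = 0.0
    for value in {row[attribute] for row in rows}:
        subset = [row[target] for row in rows if row[attribute] == value]
        weighted += len(subset) / total * entropy(subset)
    return parent - weighted


def best_attribute(rows, attributes, target):
    """ID3's selection step: the unused attribute with the highest information gain."""
    return max(attributes, key=lambda a: information_gain(rows, a, target))


# The plate example: two different sides vs. the same text on both sides.
print(entropy(["we are open", "we are closed"]))  # 1.0
print(entropy(["we are open", "we are open"]))    # 0.0

# Hypothetical toy data: is the café open, given the day and the time of day?
rows = [
    {"day": "weekday", "hour": "morning", "status": "open"},
    {"day": "weekday", "hour": "night", "status": "closed"},
    {"day": "weekend", "hour": "morning", "status": "open"},
    {"day": "weekend", "hour": "night", "status": "closed"},
]
print(best_attribute(rows, ["day", "hour"], "status"))  # hour
```

In this toy data, splitting on `hour` produces two pure subsets with zero entropy, so it gains the full 1 bit. Splitting on `day` leaves each subset as mixed as the parent and gains nothing, so ID3 would split on `hour` first.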
